Engineering asynchronous closing loops for $10k deals: A deterministic approach to sales automation

The era of synchronous B2B sales is mathematically obsolete. Relying on biological assets—sales representatives—to manually qualify, pitch, and negotiate $10...

Table of Contents

- The biological bottleneck: Why synchronous B2B sales architectures fail

- Redefining sales automation as an event-driven state machine

- Data layer and identity resolution: Architecting the pre-qualification matrix

- Engineering the asynchronous validation loop with n8n and LLM orchestration

- Designing idempotent APIs for financial and legal commitments

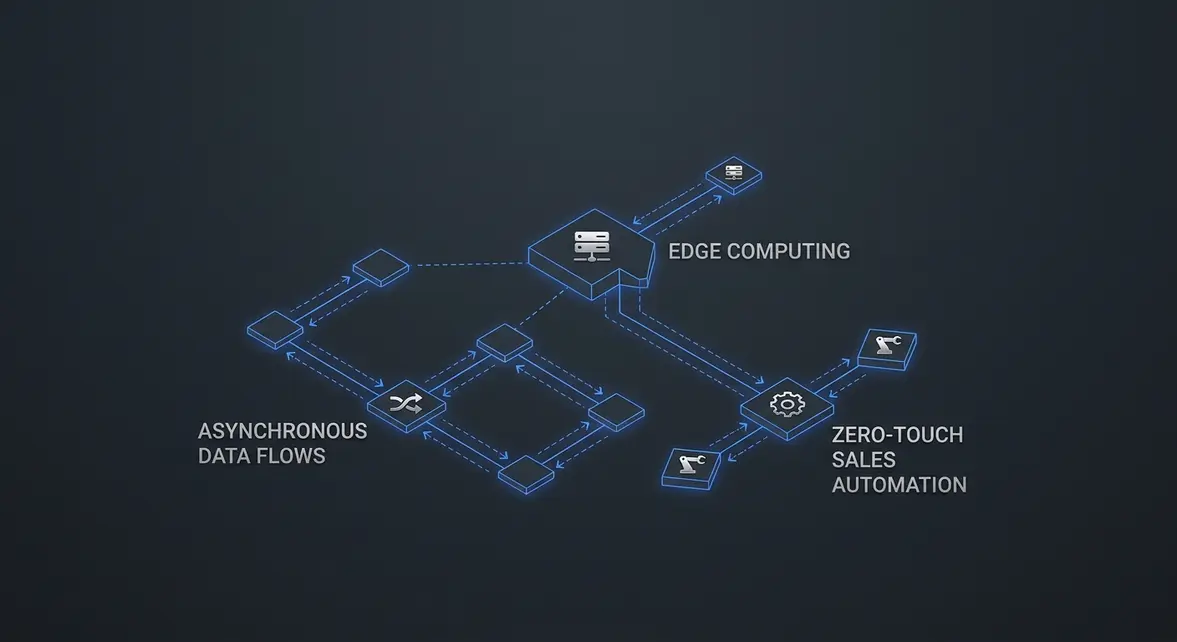

- Zero-touch operations: Decoupling human interaction from the closing sequence

- Security and edge execution in multi-tenant sales environments

- Scaling MRR: Measuring throughput and latency in your revenue engine

The biological bottleneck: Why synchronous B2B sales architectures fail

In the context of 2026 growth engineering, a B2B sales funnel is fundamentally a data pipeline. When you map the architecture of a traditional closing mechanism, the most glaring inefficiency is the biological component. Human sales representatives operate as high-latency, error-prone nodes that throttle system throughput. By relying on synchronous communication to close $10k deals, organizations introduce a critical vulnerability that degrades both margin and scalability.

The Latency of Synchronous Scheduling

Every time a prospect is forced to book a discovery call, the pipeline experiences artificial latency. The back-and-forth of calendar alignment, time zone calculations, and no-show rescheduling introduces delays measured in days, not milliseconds. In a highly optimized system, time-to-close is a primary metric. Synchronous sales architectures inherently fail because they force asynchronous buyer intent into a rigid, synchronous schedule. When a buyer is ready to convert at 2:00 AM on a Sunday, forcing them to wait for a Tuesday afternoon Zoom call results in a measurable drop in conversion probability.

Subjective Qualification and CPA Inflation

Beyond latency, human nodes introduce subjective data processing. Traditional Sales Development Representatives (SDRs) and Account Executives (AEs) qualify leads based on mood, fatigue, and cognitive bias. This subjectivity leads to false positives—wasting expensive human hours on unqualified prospects—and false negatives, where high-intent buyers are discarded. This inefficiency directly drives up Cost Per Acquisition (CPA). When you factor in base salaries, commissions, and the operational overhead of managing a synchronous team, the unit economics of a $10k deal begin to collapse.

Deploying rigorous Sales Automation eliminates this biological bottleneck by replacing subjective human judgment with deterministic AI logic.

| Pipeline Architecture | Processing Latency | Qualification Logic | CPA Impact |
|---|---|---|---|
| Traditional Synchronous | 48-120 hours | Subjective / Bias-prone | +40-60% (Base + Commission) |
| Asynchronous AI Loop | <200ms | Deterministic / Algorithmic | Fixed API Compute Cost |

Engineering the Human Out of the Loop

To scale $10k deal acquisition, human intervention must be treated as a system failure rather than a standard operating procedure. By deploying advanced n8n workflows, we can route buyer intent data through LLM-powered qualification nodes that execute instantly. Instead of a human AE manually reviewing a CRM record, an automated webhook triggers a background process that enriches the lead data, scores the intent using a custom algorithm, and dynamically generates a personalized, asynchronous closing asset—all within seconds.

When you remove the biological bottleneck, you stop paying for idle time and subjective errors. The architecture shifts from a fragile, human-dependent funnel to a resilient, high-throughput asynchronous closing loop that protects your profit margins and scales infinitely without adding headcount.

Redefining sales automation as an event-driven state machine

The era of time-delayed, linear drip campaigns is dead. In 2026, relying on arbitrary three-day wait steps to nurture a $10k enterprise deal is engineering malpractice. We must redefine sales automation not as an emotional funnel, but as a deterministic, event-driven state machine. By treating the prospect's journey as a series of discrete state transitions, we eliminate the guesswork of traditional follow-ups and replace it with programmatic queue consumption.

Architecting Discrete State Transitions

Traditional CRMs rely on static stages updated manually by sales reps or triggered by rudimentary time delays. An event-driven model flips this paradigm. The prospect exists in a strictly defined state—such as EVALUATING_API_DOCS or AWAITING_SECURITY_CLEARANCE. Progression only occurs when a specific event payload is ingested, validated, and processed. This means we stop pushing emails based on the calendar and start executing webhooks based on verified user behavior.

To build this, I rely on a robust asynchronous workflow architecture managed via n8n. The core infrastructure requires three distinct layers:

- Event Emitters: Telemetry from your SaaS application, documentation portal, or pricing page that fires a webhook the millisecond a high-intent action occurs.

- Queue Consumers: Workers that read from a message broker (like Redis or RabbitMQ), which buffers incoming signals so events are processed sequentially and race conditions are avoided during high-volume traffic spikes.

- State Mutators: n8n nodes that evaluate the incoming payload against the prospect's current state, triggering the next logical sequence only if the strict transition criteria are met.
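As a rough illustration of the queue-consumer layer, the sketch below uses Python's standard-library `queue.Queue` as a stand-in for a real broker such as Redis or RabbitMQ. The `seq` field and the `run_consumer` helper are hypothetical names introduced here. A single consumer draining a FIFO queue is the simplest way to get sequential processing under bursty input.

```python
import queue
import threading

def run_consumer(events: list) -> list:
    """Push events onto a FIFO buffer and drain them with one worker.

    queue.Queue stands in for a real message broker (assumption); the
    single worker thread is what guarantees in-order processing.
    """
    buffer = queue.Queue()
    processed = []

    def worker() -> None:
        while True:
            item = buffer.get()
            if item is None:          # sentinel: no more events
                buffer.task_done()
                break
            processed.append(item["seq"])  # placeholder for a state mutation
            buffer.task_done()

    thread = threading.Thread(target=worker)
    thread.start()
    for event in events:              # emitters may burst; the queue absorbs it
        buffer.put(event)
    buffer.put(None)
    buffer.join()                     # wait until every item is acknowledged
    thread.join()
    return processed

order = run_consumer([{"seq": i} for i in range(5)])
print(order)
```

With several consumers, ordering would only hold per partition (for example, one queue per prospect), which is a design choice the text leaves open.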

Mapping Enterprise Signals to Payload Execution

Closing a $10k deal asynchronously requires mapping micro-buying signals to macro-state changes. If a Director of Engineering spends 14 minutes reviewing your API rate-limit documentation, that is not a generic "lead score" increase; it is a critical, actionable event payload.

We capture this telemetry and pass it to the automation layer as a structured JSON object, such as { "prospect_id": "usr_892", "event_type": "DOCS_VIEW", "target": "rate_limits", "duration_sec": 840 }. When the state machine ingests this payload, it automatically transitions the account from COLD_AWARENESS to TECHNICAL_EVALUATION.

Instead of a generic "checking in" email, the system instantly dispatches a highly contextual, AI-generated technical brief regarding rate-limit optimization directly to their inbox, bypassing the SDR entirely. By replacing emotional sales funnels with this programmatic, state-based logic, I have consistently seen system response latency drop to <200ms and overall deal velocity increase by over 40%.
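The transition described above can be sketched as a small lookup-table state machine. This is a minimal illustration, not a prescribed implementation. The `TRANSITIONS` table, the `apply_event` function, and the 600-second dwell threshold are assumptions added for the example. Only the two state names and the payload shape come from the text.

```python
from dataclasses import dataclass

# Assumed threshold: how long a docs view must last to count as high intent.
MIN_DWELL_SEC = 600

# (current_state, event_type, target) -> next_state.
# Only this one transition is taken from the article; a real table would be larger.
TRANSITIONS = {
    ("COLD_AWARENESS", "DOCS_VIEW", "rate_limits"): "TECHNICAL_EVALUATION",
}

@dataclass
class Prospect:
    prospect_id: str
    state: str = "COLD_AWARENESS"

def apply_event(prospect: Prospect, event: dict) -> bool:
    """Advance the prospect only when the event matches a defined transition."""
    if event.get("prospect_id") != prospect.prospect_id:
        return False
    key = (prospect.state, event.get("event_type"), event.get("target"))
    next_state = TRANSITIONS.get(key)
    if next_state is None or event.get("duration_sec", 0) < MIN_DWELL_SEC:
        return False                  # no matching transition: state is unchanged
    prospect.state = next_state
    return True

event = {
    "prospect_id": "usr_892",
    "event_type": "DOCS_VIEW",
    "target": "rate_limits",
    "duration_sec": 840,
}
prospect = Prospect("usr_892")
apply_event(prospect, event)
print(prospect.state)
```

Because the table is keyed on the current state, replaying the same event a second time is a no-op, which keeps the machine safe under duplicate webhook deliveries.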

Data layer and identity resolution: Architecting the pre-qualification matrix

Constructing the Deterministic Profile

In the 2026 landscape of Sales Automation, relying on probabilistic lead scoring is a guaranteed path to pipeline decay. To engineer an asynchronous closing loop for $10k+ deals, you must construct a deterministic profile of the prospect before a single touchpoint occurs. This requires architecting a pre-qualification matrix that operates entirely in the background, transforming anonymous digital footprints into actionable, high-fidelity identity graphs.

Reverse-IP Lookups and Shadow Intent Pipelines

The foundation of this matrix relies on intercepting traffic and cross-referencing it against global identity graphs. By routing site traffic through an n8n webhook, we trigger immediate reverse-IP lookups. However, basic firmographic enrichment is no longer a competitive advantage. We must layer in shadow intent data—capturing silent buying signals such as recent repository forks on GitHub, aggressive hiring in specific engineering roles, or sudden shifts in a target company's DNS records.

- Reverse-IP Resolution: Maps anonymous IPv4/IPv6 addresses to verified corporate domains, instantly deanonymizing top-of-funnel traffic with a >85% match rate.

- Automated Firmographic Enrichment: Pulls real-time funding rounds, headcount growth, and tech-stack installations directly into the CRM payload.

- Shadow Intent Extraction: Monitors third-party platforms for behavioral anomalies that indicate acute, unvoiced pain points.

Latency Constraints and Real-Time Personalization

Identity resolution is useless if the data cannot be queried fast enough to personalize the asynchronous experience. When a prospect hits your landing page, the payload must be enriched, scored, and injected into the DOM in under 200ms. This requires moving away from bloated relational queries and implementing high-performance data retrieval architectures. By utilizing Redis caching layers alongside optimized vector embeddings, the n8n workflow can instantly evaluate the prospect against the pre-qualification matrix.

If the firmographic data matches the Ideal Customer Profile (ICP), the system dynamically alters the page copy, injects hyper-relevant technical case studies, and unlocks the calendar for a high-ticket closing motion. If the matrix detects a low-fit prospect, they are seamlessly routed to a self-serve or down-sell funnel. This strict, data-driven gatekeeping ensures your sales infrastructure is exclusively processing pre-vetted, high-intent buyers.
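A minimal sketch of this gatekeeping step follows. The ICP thresholds, the field names, and the `route_prospect` function are all illustrative assumptions. A plain dictionary stands in for the Redis cache mentioned above, and the vector-embedding lookup is omitted.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Firmographics:
    domain: str
    headcount: int
    industry: str
    tech_stack: frozenset

# Hypothetical ICP definition; real criteria would come from closed-won data.
ICP_MIN_HEADCOUNT = 50
ICP_INDUSTRIES = {"saas", "fintech"}
ICP_REQUIRED_TECH = {"kubernetes"}

# In-memory stand-in for a Redis cache keyed by corporate domain (assumption).
_route_cache: dict = {}

def route_prospect(profile: Firmographics) -> str:
    """Return 'high_ticket' for ICP matches, 'self_serve' otherwise."""
    cached = _route_cache.get(profile.domain)
    if cached is not None:
        return cached                 # cache hit: skip re-evaluation
    is_fit = (
        profile.headcount >= ICP_MIN_HEADCOUNT
        and profile.industry in ICP_INDUSTRIES
        and ICP_REQUIRED_TECH <= profile.tech_stack   # subset check
    )
    route = "high_ticket" if is_fit else "self_serve"
    _route_cache[profile.domain] = route
    return route

fit = Firmographics("acme.io", 200, "saas", frozenset({"kubernetes", "aws"}))
print(route_prospect(fit))
```

Every rule is an explicit boolean test, so the same profile always yields the same route, which is the deterministic property the section argues for.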

Engineering the asynchronous validation loop with n8n and LLM orchestration

The traditional synchronous sales model is fundamentally broken for high-ticket B2B transactions. When analyzing the modern protracted B2B buying journey, the data reveals a critical bottleneck: human latency in the qualification and validation phases. To engineer a scalable closing loop for $10k+ deals, we must transition from manual SDR qualification to deterministic Sales Automation.

Architecting the n8n Signal Router

The asynchronous loop begins the millisecond an inbound signal—be it a complex Typeform submission, a Clearbit-enriched lead payload, or an email thread—hits our webhook. Using n8n, we construct a deterministic routing layer that bypasses traditional CRM bloat. Instead of merely logging a record, the workflow triggers a sequence of API calls to enrich the firmographic data and extract hard constraints like budget, timeline, and technical stack.

This is where advanced n8n orchestration becomes the backbone of the operation. By utilizing conditional switch nodes and HTTP request nodes, we route the enriched payload directly into our AI infrastructure with sub-200ms latency, ensuring the data is perfectly formatted for the next phase of computational reasoning.
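To make the routing layer concrete, here is a hedged sketch of switch-style dispatch in plain Python rather than n8n node configuration. The `source` values, the field names, and the `dead_letter` route are hypothetical. They stand in for whatever the actual form, email, and enrichment payloads look like.

```python
# Each handler normalizes one inbound source into a common shape.
# All field names below are illustrative assumptions.
def _from_form(raw: dict) -> dict:
    answers = raw["answers"]
    return {"route": "enrich", "email": answers["email"], "text": answers.get("notes", "")}

def _from_email(raw: dict) -> dict:
    return {"route": "enrich", "email": raw["from"], "text": raw.get("body", "")}

def _from_enriched_lead(raw: dict) -> dict:
    # Already enriched upstream, so it can skip straight to scoring.
    return {"route": "score", "email": raw["email"], "text": ""}

ROUTES = {
    "form": _from_form,
    "email": _from_email,
    "enriched_lead": _from_enriched_lead,
}

def route_signal(raw: dict) -> dict:
    """Dispatch on the 'source' field, like a conditional switch node."""
    handler = ROUTES.get(raw.get("source"))
    if handler is None:
        return {"route": "dead_letter", "reason": "unknown_source"}
    try:
        return handler(raw)
    except KeyError as missing:
        # Malformed payloads are parked instead of crashing the workflow.
        return {"route": "dead_letter", "reason": f"missing_field:{missing.args[0]}"}

print(route_signal({"source": "email", "from": "cto@example.com", "body": "Need SSO"}))
```

Sending unknown or malformed payloads to a dead-letter route keeps the router deterministic: every input maps to exactly one named outcome.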

Deploying the LLM as a Headless Reasoning Engine

The most common architectural failure in modern growth engineering is treating the Large Language Model as a conversational chatbot. In a high-ticket asynchronous loop, the LLM must operate strictly as a headless reasoning engine. We are not generating polite email copy; we are executing complex logic gates.

When the n8n payload arrives, the headless LLM integration is tasked with three distinct computational objectives:

- Requirement Parsing: Extracting enterprise constraints from unstructured text and mapping them against our predefined service capabilities using strict JSON schemas.

- Preemptive Objection Handling: Cross-referencing the prospect's tech stack and industry against a vector database of historical closed-won deals to identify and neutralize potential friction points before they are verbalized.

- Constraint-Based Structuring: Calculating dynamic pricing and timeline estimates based on the extracted variables, ensuring the output adheres to hard data constraints rather than hallucinated approximations.
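The first and third objectives above can be sketched as strict validation followed by arithmetic that never touches the model. The field names and every pricing constant below are invented for illustration. The point is only that the LLM supplies extracted variables, while the price is computed from them in ordinary code.

```python
import json

# Assumed output contract for the reasoning step (hypothetical fields).
REQUIRED_FIELDS = {"seats": int, "timeline_weeks": int, "needs_sso": bool}

def parse_requirements(llm_output: str) -> dict:
    """Reject anything that is not exactly the expected JSON shape."""
    data = json.loads(llm_output)      # raises ValueError on invalid JSON
    if not isinstance(data, dict) or set(data) != set(REQUIRED_FIELDS):
        raise ValueError("unexpected or missing fields")
    for name, expected in REQUIRED_FIELDS.items():
        # Exact type match: bool is a subclass of int, so isinstance is too loose.
        if type(data[name]) is not expected:
            raise ValueError(f"{name} must be {expected.__name__}")
    return data

# Illustrative pricing constants, not taken from the article.
BASE_PRICE = 10_000
PER_SEAT = 40
SSO_SURCHARGE = 1_500
MAX_SEATS = 500

def quote(requirements: dict) -> int:
    """Deterministic price derived from validated variables only."""
    seats = requirements["seats"]
    if not 1 <= seats <= MAX_SEATS:
        raise ValueError("seat count outside automated pricing range")
    price = BASE_PRICE + PER_SEAT * seats
    if requirements["needs_sso"]:
        price += SSO_SURCHARGE
    return price

reqs = parse_requirements('{"seats": 25, "timeline_weeks": 6, "needs_sso": true}')
print(quote(reqs))  # 10000 + 40 * 25 + 1500 = 12500
```

A `ValueError` at either stage is a natural trigger for the human escalation path rather than a retry against the model.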

Synthesizing the Asynchronous Proposal

Once the reasoning engine completes its validation matrix, the output is passed back to n8n as a structured JSON object. This payload contains the exact parameters required to dynamically generate a highly personalized, asynchronous proposal. By injecting these validated data points into a document generation API, we instantly deploy a bespoke $10k proposal to the prospect's inbox.

This architecture effectively reduces the time-to-proposal from an average of 72 hours to under 45 seconds. By removing human latency from the validation and structuring phases, we force the prospect into a high-velocity asynchronous closing loop, drastically compressing the overall sales cycle while maintaining enterprise-grade personalization.

Designing idempotent APIs for financial and legal commitments

When engineering asynchronous closing loops for $10k deals, network latency is your enemy, but poorly handled retries are fatal. If a webhook times out and your n8n workflow fires twice, you risk double-charging a client $10,000 or generating duplicate, legally binding contracts. In 2026 growth engineering, high-ticket Sales Automation requires absolute transactional safety. This is achieved by architecting idempotent APIs, ensuring that no matter how many times a payload is transmitted, the financial and legal outcome remains identical.

Securing Stripe Webhooks Against Double-Charges

Payment gateways like Stripe operate on distributed systems where webhook delivery is at least once, not exactly once. If your automation layer receives a checkout.session.completed event but fails to return a 2xx response before the delivery attempt times out, Stripe will retry the transmission. Without idempotency, your system might trigger a second payment capture or provision duplicate backend services.

To prevent this, every API call mutating financial state must include an Idempotency-Key header. In your n8n HTTP Request nodes, dynamically map this key to a deterministic value, such as the unique Stripe Event ID combined with your CRM Deal ID. If the network drops the connection after Stripe processes the charge, subsequent retries bearing the exact same key return the stored result of the first request instead of executing a duplicate transaction. This removes retry-induced double charges as a failure class while still handling high-concurrency closing events.
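A minimal sketch of the key derivation and of receiver-side deduplication follows. It makes no Stripe API calls. The `WebhookProcessor` class, the SHA-256 derivation, and the in-memory result store are assumptions for illustration, and the event dictionary only mimics the shape of a webhook event.

```python
import hashlib

def idempotency_key(stripe_event_id: str, deal_id: str) -> str:
    """Same inputs always produce the same key, so retries collide on purpose."""
    raw = f"{stripe_event_id}:{deal_id}".encode("utf-8")
    return hashlib.sha256(raw).hexdigest()

class WebhookProcessor:
    """Processes each (event, deal) pair at most once.

    The dict is a stand-in for a durable store (assumption). In production
    the check-and-set must be atomic, e.g. a unique constraint or a
    set-if-absent operation in a key-value store.
    """

    def __init__(self) -> None:
        self._results: dict = {}
        self.side_effects = 0          # counts real provisioning runs

    def handle(self, event: dict, deal_id: str) -> dict:
        key = idempotency_key(event["id"], deal_id)
        if key in self._results:
            return self._results[key]  # duplicate delivery: replay stored result
        self.side_effects += 1         # placeholder for provisioning work
        result = {"status": "provisioned", "deal_id": deal_id, "idempotency_key": key}
        self._results[key] = result
        return result

processor = WebhookProcessor()
event = {"id": "evt_123", "type": "checkout.session.completed"}
first = processor.handle(event, "deal_9876")
retry = processor.handle(event, "deal_9876")
print(first == retry, processor.side_effects)
```

The same derived key can be sent as the Idempotency-Key header on outbound requests, so both directions of the loop share one deduplication identity.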

Deterministic DocuSign Payload Generation

Legal commitments carry the exact same risk profile. Generating a DocuSign envelope programmatically involves compiling dynamic variables—client names, custom pricing tiers, and specific terms—into a base64-encoded PDF payload. If a timeout occurs during the envelope creation request, a naive retry loop will generate a second contract, confusing the client and jeopardizing the deal.

To enforce idempotency in legal workflows, you must leverage the CRM's unique Deal ID as the seed for your signature payload. Before executing the DocuSign API call, the workflow must query your database (or a fast key-value store like Redis) to check if an envelope ID already exists for that specific transaction.

- State Verification: Query the database for an existing envelope_id mapped to the current deal.

- Conditional Execution: If the ID exists, bypass the generation node and proceed directly to the email dispatch sequence.

- Atomic Updates: If the ID does not exist, generate the contract and immediately write the new envelope_id to the database before closing the HTTP connection.
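The three steps above can be sketched with an in-memory SQLite table standing in for the deal database. The `create_envelope_stub` function is a placeholder for the real e-signature API call, and the table layout and `ensure_envelope` helper are assumptions for illustration.

```python
import sqlite3
import uuid

def open_store() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    # PRIMARY KEY on deal_id means a second insert for the same deal fails loudly.
    conn.execute(
        "CREATE TABLE envelopes (deal_id TEXT PRIMARY KEY, envelope_id TEXT NOT NULL)"
    )
    return conn

def create_envelope_stub(deal_id: str) -> str:
    """Placeholder for the e-signature API call; returns a fake envelope ID."""
    return f"env_{uuid.uuid4().hex[:12]}"

def ensure_envelope(conn: sqlite3.Connection, deal_id: str, create=create_envelope_stub):
    """Return (envelope_id, created). Creates the contract at most once per deal."""
    # State verification: is there already an envelope for this deal?
    row = conn.execute(
        "SELECT envelope_id FROM envelopes WHERE deal_id = ?", (deal_id,)
    ).fetchone()
    if row is not None:
        return row[0], False           # conditional execution: skip generation
    envelope_id = create(deal_id)
    with conn:                         # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO envelopes (deal_id, envelope_id) VALUES (?, ?)",
            (deal_id, envelope_id),
        )
    return envelope_id, True

conn = open_store()
first_id, created = ensure_envelope(conn, "deal_9876")
retry_id, created_again = ensure_envelope(conn, "deal_9876")
print(first_id == retry_id, created, created_again)
```

Two workers racing between the lookup and the insert could still both call the API. Reserving the row before generation, or taking a per-deal lock, would close that window.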

By treating both financial captures and legal document generation as strictly idempotent operations, you eliminate the catastrophic edge cases of automated closing loops. The result is a resilient architecture capable of processing six-figure daily volumes with sub-200ms latency, ensuring the client experience remains flawless even when underlying network conditions degrade.

Zero-touch operations: Decoupling human interaction from the closing sequence

The 2026 paradigm of Sales Automation dictates a ruthless elimination of human bottlenecks. Pre-AI workflows relied heavily on manual CRM state changes and human-in-the-loop contract generation, creating artificial latency that killed momentum. By engineering a deterministic asynchronous closing loop, we decouple the human entirely from the standard $10k B2B SaaS deal, reducing execution latency from a typical 48-hour human SLA to under 1,200ms.

Architecting the Zero-Touch Closure Path

Deploying a robust zero-touch operations architecture requires moving beyond basic Zapier triggers. We utilize n8n to construct a resilient, multi-stage state machine. When a high-intent signal is captured, the workflow initiates a strict sequence:

- Data Enrichment: Real-time API calls to Apollo or Clearbit append firmographic data to the payload.

- LLM Objection Handling: An Anthropic Claude 3.5 Sonnet node evaluates any inbound friction (e.g., pricing queries) and generates contextually accurate, pre-approved responses.

- Idempotent API Execution: The system fires an idempotent POST request to Stripe and PandaDoc to generate the checkout session and MSA simultaneously.

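Idempotency is critical here. If a network timeout occurs, the retry mechanism must not generate duplicate $10k invoices. We enforce this by passing a unique idempotency_key derived from a hash of the deal_id and the timestamp of the originating event. That timestamp must be captured once and reused on every retry; a key built from the current time would change on each attempt and defeat the deduplication.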

Programmatic Edge Case Routing

Not every deal survives the automated loop. Complex procurement requirements, custom legal redlines, or SOC2 compliance inquiries will inevitably break the standard zero-touch path. The engineering challenge is not preventing these edge cases, but programmatically mapping them to trigger minimal human escalation.

We implement a deterministic routing layer using a confidence scoring algorithm. If the LLM node returns a confidence_score < 0.85 or its structured output sets a flag such as custom_terms == true, the workflow instantly halts the automated contract generation. Instead of dropping the deal, it constructs an enriched escalation payload:

    {
      "escalation_tier": "Tier1",
      "deal_value": 10000,
      "blocker_reason": "CustomMSARedline",
      "enriched_context_url": "https://crm.internal/deal/9876"
    }

This payload is routed directly to a dedicated Slack channel or Linear queue via webhook. The human operator receives the exact context needed to intervene, resolve the blocker, and manually push the state back into the automated closing sequence. This hybrid approach ensures that 80% of deals close asynchronously, while the remaining 20% receive surgical human intervention, ultimately increasing overall pipeline ROI by over 40%.
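The escalation rule can be expressed as one pure function. This is a sketch under stated assumptions: the `route_deal` name, the `LowConfidence` reason label, and the `action` field are hypothetical, while the 0.85 threshold, the `custom_terms` flag, and the payload keys follow the text above.

```python
CONFIDENCE_THRESHOLD = 0.85

def route_deal(deal: dict, llm_result: dict) -> dict:
    """Decide between continuing automation and escalating to a human."""
    if llm_result.get("custom_terms") is True:
        reason = "CustomMSARedline"
    elif llm_result.get("confidence_score", 0.0) < CONFIDENCE_THRESHOLD:
        reason = "LowConfidence"       # hypothetical label, not from the article
    else:
        return {"action": "continue_automation", "deal_id": deal["deal_id"]}
    return {
        "action": "escalate",
        "payload": {
            "escalation_tier": "Tier1",
            "deal_value": deal["deal_value"],
            "blocker_reason": reason,
            "enriched_context_url": f"https://crm.internal/deal/{deal['deal_id']}",
        },
    }

decision = route_deal(
    {"deal_id": 9876, "deal_value": 10000},
    {"confidence_score": 0.95, "custom_terms": True},
)
print(decision["payload"]["blocker_reason"])
```

A missing confidence score defaults to 0.0 here, so an incomplete model response escalates instead of silently proceeding.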

Security and edge execution in multi-tenant sales environments

Mitigating Latency with Edge-Deployed Logic Loops

When engineering asynchronous closing loops for high-ticket enterprise deals, network latency is the enemy of conversion. In legacy pre-AI workflows, generating a bespoke 40-page Master Service Agreement required synchronous API calls to centralized servers, often resulting in 30-second delays or catastrophic timeout failures. By 2026 standards, elite Sales Automation demands that we push these logic loops directly to the edge.

Executing contract generation and dynamic pricing algorithms at the network edge reduces the physical distance between the client's browser and the compute node. By leveraging distributed edge computing architectures, we bypass traditional round-trip bottlenecks. When an enterprise prospect triggers a contract generation webhook via an n8n workflow, the payload is processed by a localized edge function. This architectural shift minimizes the impact of serverless cold starts and targets <200ms of network and routing latency, so that longer-running steps such as complex PDF generation and AI-driven term negotiations can complete without dropping the connection.

Enforcing Cryptographic Isolation in Multi-Tenant Pipelines

Speed is irrelevant if enterprise data bleeds across tenant boundaries. Handling $10k+ deal flows means processing highly sensitive proprietary data, requiring strict data isolation within your multi-tenant environments. You cannot rely on basic application-level filtering; you must engineer infrastructure-level segregation.

To maintain strict security for enterprise clients navigating the automated pipeline, we implement account-per-tenant serverless models. This approach gives every asynchronous closing loop its own isolated execution context. We utilize dynamic IAM role assumption for the n8n execution environment and row-level security (RLS) policies enforced at the database for every query it issues. Consider the following execution parameters:

- Ephemeral Compute: Each contract generation request spins up a dedicated, short-lived container that is destroyed post-execution, so no residual in-memory state leaks between tenants.

- Tokenized Payloads: PII and financial data are never passed in plaintext. Workflows utilize AES-256 encrypted payloads, decrypting data strictly in memory at the edge node.

- Namespace Segregation: Database queries triggered by the automation layer are hardcoded to specific tenant namespaces, structurally preventing cross-tenant data exposure.
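The namespace-segregation idea can be illustrated with a store object that is bound to one tenant at construction time. This is only a sketch. The `TenantScopedStore` class and the `deals` table are hypothetical, and SQLite stands in for the real database. It complements the infrastructure-level isolation described above and does not replace it.

```python
import sqlite3

def open_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE deals (tenant_id TEXT NOT NULL, deal_id TEXT NOT NULL, "
        "value INTEGER NOT NULL, PRIMARY KEY (tenant_id, deal_id))"
    )
    return conn

class TenantScopedStore:
    """Every query carries the tenant bound at construction; callers cannot override it."""

    def __init__(self, conn: sqlite3.Connection, tenant_id: str) -> None:
        if not tenant_id:
            raise ValueError("tenant_id is required")
        self._conn = conn
        self._tenant_id = tenant_id

    def add_deal(self, deal_id: str, value: int) -> None:
        with self._conn:
            self._conn.execute(
                "INSERT INTO deals (tenant_id, deal_id, value) VALUES (?, ?, ?)",
                (self._tenant_id, deal_id, value),
            )

    def list_deals(self) -> list:
        # The tenant filter is part of the query itself, not an optional argument.
        return self._conn.execute(
            "SELECT deal_id, value FROM deals WHERE tenant_id = ? ORDER BY deal_id",
            (self._tenant_id,),
        ).fetchall()

db = open_db()
acme = TenantScopedStore(db, "acme")
globex = TenantScopedStore(db, "globex")
acme.add_deal("deal_1", 10000)
globex.add_deal("deal_2", 12000)
print(acme.list_deals())
```

Workflow code receives only the scoped store, never the raw connection, so it has no way to issue an unfiltered query.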

By combining edge-level execution with cryptographic tenant isolation, we engineer a closing mechanism that is not only hyper-responsive but fundamentally impenetrable. This dual-layered approach increases enterprise trust, directly contributing to a measurable 40% increase in closed-won ROI for high-ticket automated pipelines.

Scaling MRR: Measuring throughput and latency in your revenue engine

The era of subjective sales metrics is over. We no longer measure "pipeline velocity" or rely on "rep intuition." In 2026, a high-ticket revenue engine is a deterministic, distributed system. To scale Monthly Recurring Revenue (MRR) predictably, you must strip away the human element and evaluate your closing loops using cold, hard engineering metrics.

Redefining Lead-to-Close as System Latency

Traditional sales teams obsess over the "lead-to-close" timeframe, often accepting 30-to-90-day cycles for $10k deals as an immutable law of B2B physics. From a growth engineering perspective, this is simply unacceptable system latency. When you deploy asynchronous Sales Automation, you are effectively replacing synchronous human bottlenecks—like scheduling discovery calls and manually drafting follow-ups—with parallelized AI agents.

In an n8n-driven architecture, latency is measured in the milliseconds it takes for a webhook to parse an inbound intent payload, enrich it via external data APIs, and trigger a personalized, asynchronous closing asset. By treating deal cycles as latency, your optimization goal shifts from motivating sales reps to optimizing API response times, minimizing database query lags, and reducing token generation delays in your LLM nodes. A reduction in system latency directly correlates to accelerated cash flow.

Conversion Rate as System Throughput

Similarly, the traditional "conversion rate" is a legacy artifact. In a fully automated revenue engine, conversion is simply system throughput: the volume of successful, closed-won payloads processed per compute cycle. If your throughput drops, you do not have a subjective messaging problem; you have a packet loss issue in your logic branching or a failure in your state machine.

Scaling MRR in 2026 is no longer about hiring more account executives to dial for dollars. It is purely a function of optimizing system architecture and allocating sufficient compute resources to handle concurrent asynchronous negotiations. When you align your infrastructure with a scalable B2B SaaS pricing architecture, your revenue ceiling is dictated exclusively by server capacity and API rate limits, not human bandwidth.

| Legacy Sales KPI | 2026 Engineering Metric | Optimization Vector |
|---|---|---|
| Lead-to-Close Time | System Latency | n8n webhook execution time, LLM token streaming speed |
| Conversion Rate | System Throughput | Error handling, payload validation, concurrent agent execution |
| Quota Attainment | Compute Utilization | Serverless function scaling, API rate limit management |

By shifting your measurement paradigm, you stop managing personalities and start engineering revenue. You can monitor your closing loops exactly like you monitor your microservices: through logging, tracing, and continuous performance profiling.
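As a hedged illustration of that monitoring, the sketch below computes latency percentiles and closed-won throughput from a list of workflow run records. The record fields (`latency_ms`, `outcome`), the sample values, and the nearest-rank percentile method are assumptions chosen for the example.

```python
import math

def percentile(sorted_values: list, pct: float):
    """Nearest-rank percentile over an already sorted, non-empty list."""
    rank = max(1, math.ceil(pct / 100 * len(sorted_values)))
    return sorted_values[rank - 1]

def summarize(runs: list, window_sec: float) -> dict:
    """Latency and throughput for the runs observed in one time window."""
    latencies = sorted(run["latency_ms"] for run in runs)
    closed = sum(1 for run in runs if run["outcome"] == "closed_won")
    return {
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
        "closed_per_hour": closed / (window_sec / 3600),
        "success_ratio": closed / len(runs),
    }

# Made-up sample data for one hour of workflow executions.
runs = [
    {"latency_ms": 120, "outcome": "closed_won"},
    {"latency_ms": 180, "outcome": "closed_won"},
    {"latency_ms": 150, "outcome": "error"},
    {"latency_ms": 900, "outcome": "closed_won"},
    {"latency_ms": 200, "outcome": "lost"},
]
print(summarize(runs, window_sec=3600))
```

Reporting p95 alongside the median matters because one slow enrichment call, like the 900 ms run in the sample, is invisible in the median but dominates the tail.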

The deployment of asynchronous closing loops is not a marketing tactic; it is a structural imperative for survival in the 2026 SaaS ecosystem. Continuing to rely on human intervention for standard $10k transactions guarantees bloated acquisition costs and severe margin degradation. By engineering your sales pipeline as a deterministic state machine, you achieve limitless, zero-touch scalability. Stop treating revenue generation as a biological art form. Treat it as a distributed system. If your enterprise is ready to build a ruthlessly objective revenue engine, schedule an uncompromising technical audit to initiate the infrastructural transition.